
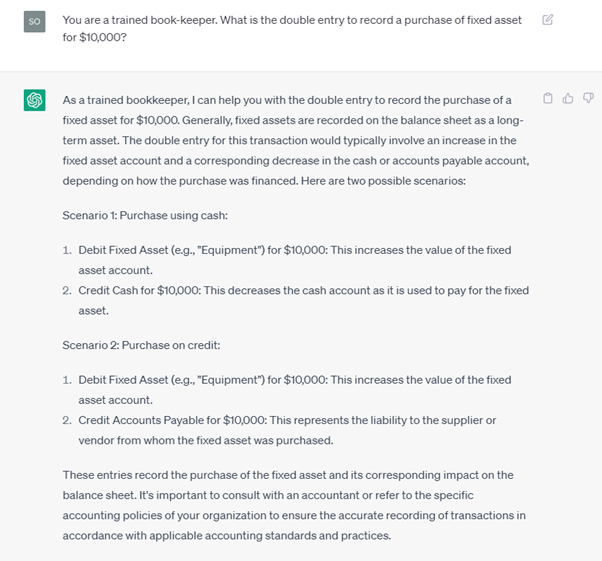

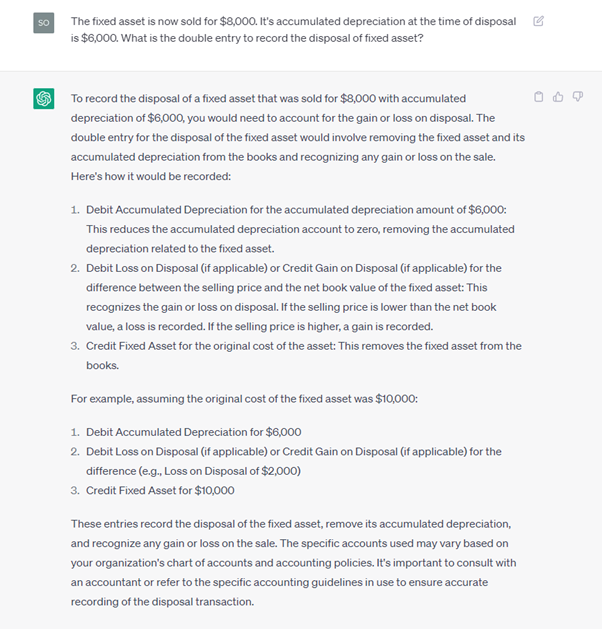

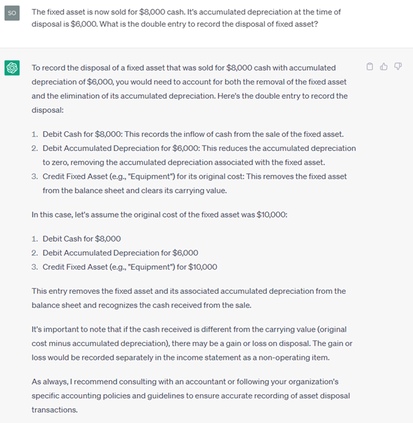

By now, I'm sure most of us have heard of Generative Pre-trained Transformer (GPT) AIs. The AI wave is well and truly upon us and, I must say, the pace of adoption and development is well beyond my initial expectations.

I first started experimenting with ChatGPT in January this year. At first, I was very impressed with its cognition, and I was excited to find out what it can and cannot do. But when I realised how smart it can be, I became a little scared. So you could say that I was excited and scared at the same time, and I mused to my friends that it's like being in a new relationship. Still, I recognised that this was going to be a game changer and started evangelising its use to the people I know. So it was heartening to see, at a recent event organised by my constituency, that quite a number of people there had heard of GPT AIs and were trying them out.

It has been suggested that AIs are threatening to replace certain jobs and professions. But as I've opined previously, when the accounting profession faced the "threat" of digitalisation, cloud computing etc., we should always look to see how technology can enable us rather than fear using it. So I put ChatGPT through its paces to try and understand its limitations. As John Oliver said in an episode of Last Week Tonight, "The problem with AI right now isn't that it's smart, it's that it's stupid in ways we can't always predict."

When GPT-4 rolled out shortly after that episode, we finally caught a glimpse of one of the ways we can predict. OpenAI has acknowledged that the AI has a propensity to "hallucinate" its responses and answers, though it would do so less frequently with GPT-4. Which, IMO, is just a politically correct way to say it lies. The issue is, it lies quite convincingly. I've tossed some technical questions at it before and have experienced how it "hallucinates" response after response before caving in and admitting it was wrong.
But I have domain knowledge, so I could confidently challenge it response after response. Can the same be said for someone with little or no domain knowledge? And the fact is that it's building up its capabilities more and more every day. I'm sure you have seen reports on how it can already pass the Bar exams etc. So sooner or later, a lot of intellectual jobs will be under threat from AI. Case in point: software coding is already very much disrupted by AI, since it can generate code faster than a human being. And by ChatGPT's own response when asked the question, the accounting profession is also very much under threat.

So I decided to test the extent of this perceived threat, and this is the result:

So far so good for simple double entry. I then tested it further:

Still spot on. Now for the ultimate test:

The first query on the left omitted the information that it was sold for cash. And even with that information included in the second query on the right, the journal entries suggested are still incorrect. More importantly, these entries do not even balance. So based on this test, IMO, the profession is safe for now.
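For readers who want a mechanical version of the "do these entries even balance?" check, here is a minimal Python sketch. Everything in it is my own illustration: the `Line` type, the `imbalance` and `is_balanced` helpers, and the sample figures are hypothetical and are not the entries ChatGPT produced. It only tests that total debits equal total credits, which is a necessary condition for a correct entry but not a sufficient one, so an entry can pass this check and still be posted to the wrong accounts.

```python
from decimal import Decimal
from typing import NamedTuple


class Line(NamedTuple):
    """One line of a journal entry (hypothetical structure for illustration)."""
    account: str
    debit: Decimal = Decimal("0")
    credit: Decimal = Decimal("0")


def imbalance(lines):
    """Return total debits minus total credits; zero means the entry balances."""
    total_debits = sum((line.debit for line in lines), Decimal("0"))
    total_credits = sum((line.credit for line in lines), Decimal("0"))
    return total_debits - total_credits


def is_balanced(lines):
    """True when total debits equal total credits."""
    return imbalance(lines) == 0


# Illustrative figures only: an asset sold for cash, recorded in full.
balanced_entry = [
    Line("Cash", debit=Decimal("5000")),
    Line("Accumulated depreciation", debit=Decimal("6000")),
    Line("Equipment", credit=Decimal("10000")),
    Line("Gain on disposal", credit=Decimal("1000")),
]

# The same sale with two lines left out, so debits fall short of credits.
unbalanced_entry = [
    Line("Cash", debit=Decimal("5000")),
    Line("Equipment", credit=Decimal("10000")),
]

if __name__ == "__main__":
    print(is_balanced(balanced_entry), imbalance(balanced_entry))
    print(is_balanced(unbalanced_entry), imbalance(unbalanced_entry))
```

A check like this catches an unbalanced answer without any accounting judgment, but deciding whether a balanced entry is actually right still takes domain knowledge.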

But if you look at its responses, they are quite convincing. I doubt a person with little or no domain knowledge would be able to tell the difference. Possessing knowledge is thus important not only in dealing with the possible threats from AI but also in detecting misinformation from the use of AI. It's imperative for us to now gather as much knowledge as we can and maintain it. And since it is almost impossible to have domain knowledge in everything, we should learn to be skeptical in processing information, to protect ourselves against misinformation in an increasingly AI-driven world. This is why I think 2023 will be THE year the Knowledge Age starts in earnest and takes over from the Information Age.

(In case you're wondering, this was not written by ChatGPT. But the images above were generated by AI.)


Curator: Echtual


实际会计师事务所有限公司

Copyright © 2016 // ECHTUAL®

5 Temasek Boulevard #17-131 Suntec Tower 5 Singapore 038985

t: +65 6513 5871
